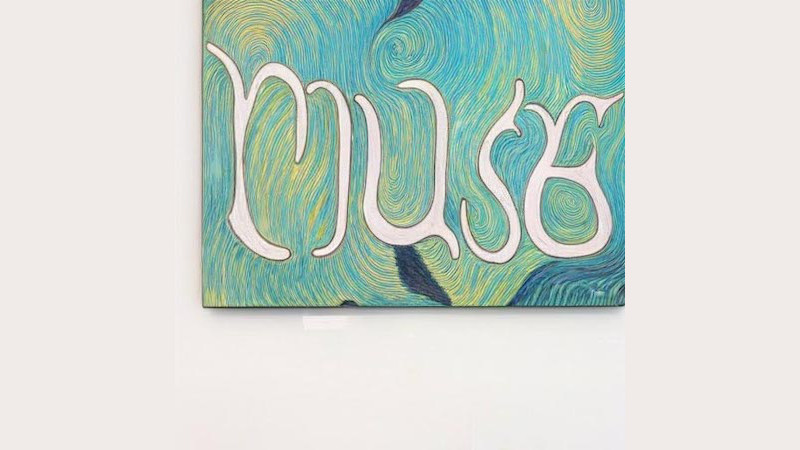

With “Muse”, Google has developed its own artificial intelligence that generates images from text. The search giant wants to outdo the competition, Dall-E in particular. Muse is said to impress with high resolution and fast generation times.

The past two years in particular have shown how rapidly artificial intelligence is progressing. Computers can now generate works of art that could just as well have come from human hands. At the same time, ChatGPT has demonstrated that AI-written texts can hardly be distinguished from human ones.

However, the technology still has problems. For example, one artificial intelligence generated historical research reports on bears in space. What sounds funny at first can quickly become a threat.

Muse: Google presents its own AI model as a text-to-image generator

The boundaries between truth and lies are becoming increasingly blurred. Nevertheless, the AI trend seems unstoppable. Google, for example, wants to shake up the market with its artificial intelligence “Muse”, a text-to-image generator. The AI model converts text into images and works in a similar way to existing systems.

Users enter a text prompt and the program creates a matching image. The advantage over other models: efficiency. Muse is said to need comparatively little computing power. This is made possible by a new approach in which image information is no longer represented as pixels, but on the basis of so-called tokens. These are compact discrete codes that each stand for a piece of the image.
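The idea of describing an image with tokens instead of pixels can be illustrated with a small Python sketch. It is a toy under stated assumptions, not Google's method: the image, the patch size, and the two-entry codebook are invented for illustration, whereas real systems learn a much larger codebook with a neural network.

```python
# Toy illustration only: PATCH, CODEBOOK and IMAGE are hypothetical values,
# not anything taken from Muse.

def split_into_patches(image, size):
    """Cut a square 2D list into flattened size x size patches, row by row."""
    n = len(image)
    patches = []
    for top in range(0, n, size):
        for left in range(0, n, size):
            patches.append([image[r][c]
                            for r in range(top, top + size)
                            for c in range(left, left + size)])
    return patches

def nearest_code(patch, codebook):
    """Index of the codebook entry closest to the patch (squared distance)."""
    def dist(entry):
        return sum((p - e) ** 2 for p, e in zip(patch, entry))
    return min(range(len(codebook)), key=lambda i: dist(codebook[i]))

def tokenize(image, codebook, size):
    """Replace every patch by the index of its nearest codebook entry."""
    return [nearest_code(p, codebook) for p in split_into_patches(image, size)]

def detokenize(tokens, codebook, size, n):
    """Rebuild an approximate n x n image by pasting codebook patches back."""
    image = [[0] * n for _ in range(n)]
    per_row = n // size
    for k, token in enumerate(tokens):
        top, left = (k // per_row) * size, (k % per_row) * size
        for j, value in enumerate(codebook[token]):
            image[top + j // size][left + j % size] = value
    return image

PATCH = 2
CODEBOOK = [[0] * 4, [255] * 4]  # token 0 = dark patch, token 1 = bright patch

IMAGE = [
    [0,   0,   250, 255],
    [0,   5,   255, 255],
    [255, 250, 0,   0],
    [255, 255, 0,   10],
]

TOKENS = tokenize(IMAGE, CODEBOOK, PATCH)
RESTORED = detokenize(TOKENS, CODEBOOK, PATCH, len(IMAGE))
print(len(TOKENS), "tokens instead of", len(IMAGE) ** 2, "pixel values")
```

In this toy, 16 pixel values shrink to 4 tokens, and the restored image is only an approximation of the original. That trade-off, fewer values to process in exchange for some loss of detail, is the general reason token-based approaches can be cheaper to compute.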

Google: Abuse potential still too high

The training of the artificial intelligence still works the same way as with other models. The algorithm receives images with matching captions and encodes the information in its own digital “brain”. When users then request an image of a dog wearing sunglasses, Muse decodes and merges the information about both objects.

The result is an artificially generated image in extremely high resolution. It is still unclear when the system will be available to the public. Google says that the potential for abuse is still too high and that it is therefore not releasing Muse for now. In addition, the system apparently still struggles with long texts and numbers.

Source: https://www.basicthinking.de/blog/2023/01/20/google-muse-ki-text-zu-bild/